Educatius Case Study

Centralised Review & Feedback System

01

Context

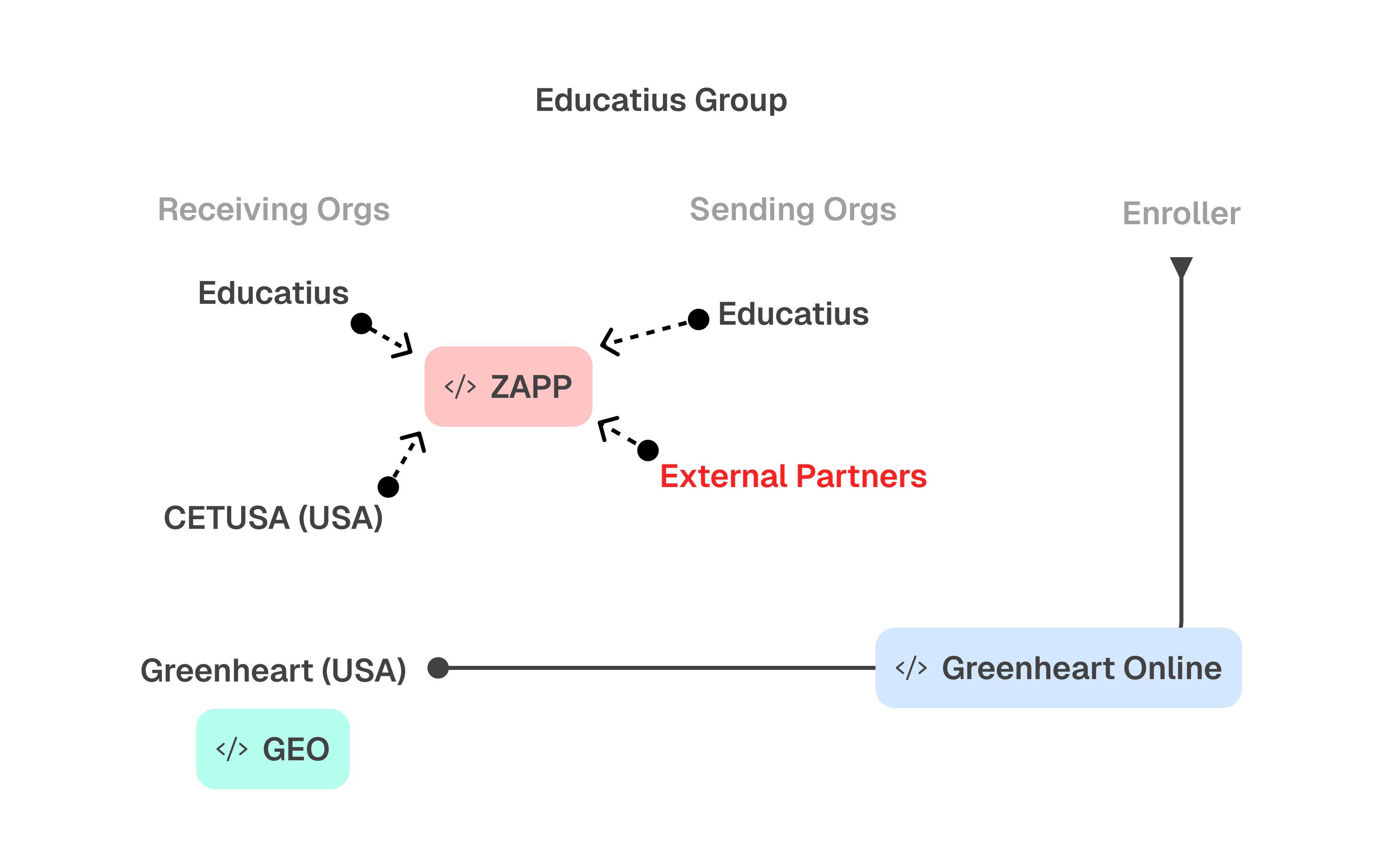

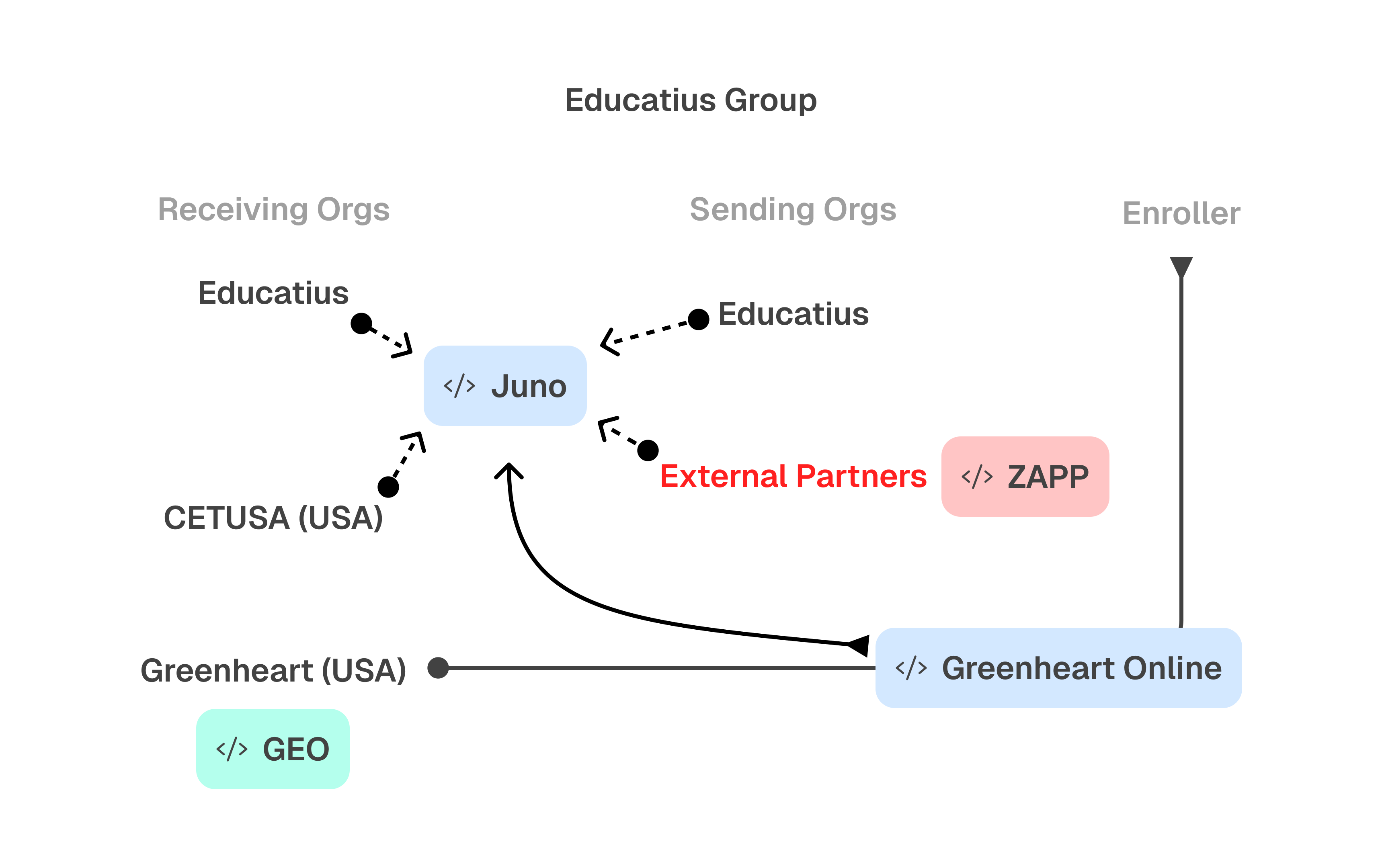

Educatius Group is a Swedish education company with multiple subsidiaries worldwide, all in the business of international exchange programmes. These subsidiaries operate as internal partners to each other - separate businesses that collaborate across the group. There are also external partners in the same industry but outside the Educatius group. Participant applications flow between all these partners throughout the recruitment and placement process.

Greenheart Exchange, a not-for-profit subsidiary based in Chicago, operates as a visa sponsor for exchange participants - students, interns, and holiday workers - managing them from recruitment through to departure from the US. Greenheart had an existing system called GEO and put out an RFP to replace it. Enroller, the group’s technology subsidiary, won that contract. The broader vision was to consolidate all subsidiaries and partners onto one platform.

The first product delivered was for the CAP programme (Cultural Ambassador Program - training and internships). This is where I designed the feedback system. The second phase was the High School Program (HSP), where the system was improved.

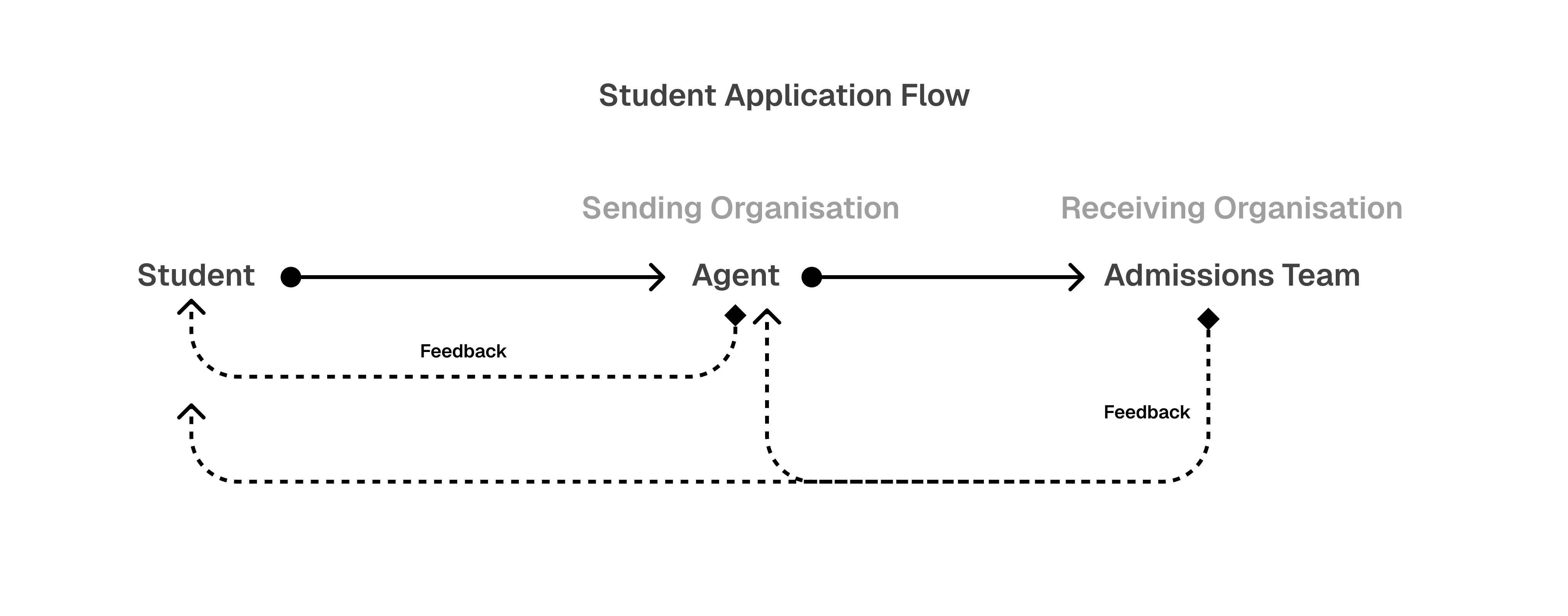

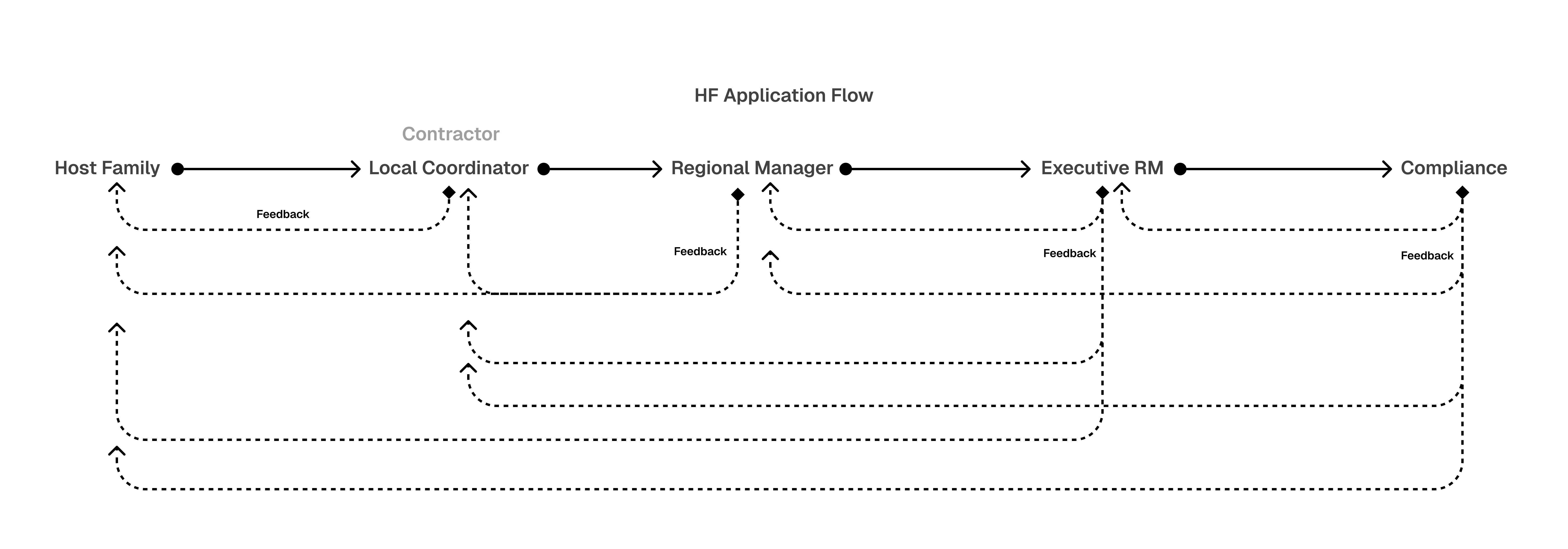

Participant applications were living documents - they could be submitted before being fully complete because participants needed time to gather documentation, complete medical tests, get vaccines, and more. Applications were worked on progressively until cut-off dates. The users working on these applications varied significantly: some partners offered to manage applications on behalf of participants as a paid or core service, while others did not - meaning individual participants (students, interns, holiday workers) interfaced directly with Greenheart. Local Coordinators were also involved during recruitment.

Before this system, driving applications from partial to complete was entirely manual. Greenheart staff chased partners and participants over email with no structured visibility - nobody could see at a glance what was incomplete, who was responsible, or what specific action was needed. My constraints were delivery timeline pressure, technical limitations of the existing platform, and being the sole designer.

02

Information Architecture

The system was built on a hierarchical review model. Applications moved upward through levels: participant, to partner or Local Coordinator, to Greenheart. Partners and Local Coordinators worked on applications they were directly responsible for. Greenheart sat above all partners as the central reviewing authority, reviewing every application regardless of its source. Even within Greenheart, there were internal levels of approval before an application was accepted into the system.

The application form itself was section-based - personal information, documents, medical records, and more - with individual fields within each section. The core of the design was the feedback mechanism: Greenheart reviewers could attach specific feedback to individual fields as field-level comments. When feedback was sent back from any level, it was visible to everyone below that point in the chain. If Greenheart flagged a field, both the partner and the participant could see it.
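The visibility rule above can be sketched in a few lines. This is an illustration only, not the production implementation: the `Level` enum, the `FieldComment` type, and the field names are all hypothetical. It also assumes that comments are hidden from levels above the author, which the rule as described does not specify.

```python
from dataclasses import dataclass
from enum import IntEnum


class Level(IntEnum):
    """Review levels, ordered from the bottom of the chain to the top (hypothetical names)."""
    PARTICIPANT = 1
    PARTNER = 2       # partner or Local Coordinator
    GREENHEART = 3


@dataclass(frozen=True)
class FieldComment:
    section: str
    field_name: str
    text: str
    author_level: Level


def can_see(comment: FieldComment, viewer_level: Level) -> bool:
    """Feedback is visible at the author's level and at every level below it.

    Assumption: levels above the author do not see the comment.
    """
    return viewer_level <= comment.author_level


def visible_comments(comments, viewer_level):
    """Return the comments a viewer at the given level would see."""
    return [c for c in comments if can_see(c, viewer_level)]


# Illustrative data only.
comments = [
    FieldComment("documents", "passport_scan", "Scan is cropped, please re-upload.", Level.GREENHEART),
    FieldComment("medical", "vaccination_record", "Second dose date is missing.", Level.PARTNER),
]
```

With this rule, a comment raised by Greenheart reaches both the partner and the participant, so whoever is best placed to fix the field can do so.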

Anyone in the chain could act on the feedback - whoever was best positioned could edit the field and send the application back up without waiting on others. When a field value was changed, it carried a visual “changed” indicator so the reviewer could immediately see what had been updated. The original comment remained visible under the field, giving the reviewer full context on what was requested. The reviewer could then either approve the overall application or add further comments to the same or different fields.
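A minimal sketch of the edit-and-resubmit behaviour described above, under stated assumptions: the `ApplicationField` class and its method names are hypothetical, and the real system may have tracked changes differently. The point it illustrates is that an edit sets the "changed" flag while the original comment stays attached to the field.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class ApplicationField:
    """One form field with its feedback history (hypothetical model)."""
    name: str
    value: Optional[str] = None
    comments: List[str] = field(default_factory=list)
    changed: bool = False

    def add_comment(self, text: str) -> None:
        """Reviewer adds feedback; a new round starts, so the flag is reset."""
        self.comments.append(text)
        self.changed = False

    def edit(self, new_value: str) -> None:
        """Anyone in the chain edits the field; comments are kept for context."""
        if new_value != self.value:
            self.value = new_value
            self.changed = True


passport = ApplicationField("passport_number", value="X123")
passport.add_comment("Number does not match the uploaded scan.")
passport.edit("X1234567")
```

After the edit, the reviewer sees both the changed flag and the comment that prompted the change.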

A dashboard with an application progress section notified users about incomplete sections and fields where feedback had been received. Notification emails were also sent when feedback was added, keeping all stakeholders informed without requiring them to check the system constantly.

Alternatives considered:

The field-level feedback mechanism itself was a confident design decision early on. The real challenge - and where alternatives were explored - was in making it work across a multi-level hierarchy. How should feedback cascade between levels? Who should see what? Who should be able to edit? These questions drove the iteration between CAP and HSP implementations.

Where complexity showed up:

The same interface had to serve professional partners managing dozens of applications and individual participants navigating their own. These are fundamentally different users with different levels of expertise, different motivations, and different tolerances for complexity. Solving this through visual indicators that didn’t require domain knowledge and progressive disclosure - layering complexity so basic users weren’t overwhelmed while power users could access deeper detail - was the hardest part of the information architecture.

03

Designs

04

Low-fi to Final Mockups

The design process started by mapping the full workflow - where data entered the system, where it stalled, who touched it at each stage, and what each stakeholder needed to do their job. This revealed the hierarchical review model as a natural reflection of how the partner ecosystem actually operated.

A key design tension emerged early: the same interface had to serve both professional partners managing dozens of applications and individual participants navigating their own. This was addressed through visual indicators that didn’t require domain knowledge and progressive disclosure - layering complexity so that basic users saw what they needed without being overwhelmed, while power users could access deeper detail.

The structure evolved significantly between the CAP and High School Program implementations, with each iteration refining how feedback was surfaced, how users navigated between sections, and how the system communicated what needed attention.


05

Key Decisions

The feedback system involved several critical design decisions, each with real trade-offs. These weren’t theoretical exercises - they were shaped by delivery pressure, technical constraints, and the realities of a multi-stakeholder ecosystem.

Key Decision

Cascading field-level feedback over application-level approval

Trade-off

Advocating for nonlinear navigation after a developer compromise

Insight

Building it twice: CAP limitations resolved in the High School Program

Key Decision

What was intentionally excluded

06

Retrospective

This system was built twice: first for CAP, then for the High School Program. The second iteration was informed by real usage and real failures from the first. That rare opportunity to revisit and refine shaped how I think about design iteration today.

What worked well

- The cascading feedback model eliminated the manual email-chasing process entirely. Feedback was contextual, specific, and visible to everyone who needed to act on it.

- Making incompleteness visible at the field level shifted the burden from humans chasing humans to the system guiding people through what was needed.

- The hierarchical review structure reflected how the partner ecosystem actually worked, so adoption was natural rather than forced.

What I’d refine

- Analytics on feedback patterns - understanding which fields got flagged most frequently and which partners had the highest rejection rates. This data would have revealed systemic issues: if passport scans were flagged 40% of the time, maybe the upload guidance needed improving rather than relying on the feedback loop to catch it every time.

- More user research upfront - the CAP to HSP improvements were driven by real usage, which worked, but catching those issues through research before they hit production would have been more efficient and less costly to resolve.
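As a rough illustration of the feedback analytics mentioned above, a per-field flag rate could be computed from a log of feedback events. The event shape, function name, and sample data here are hypothetical, since no such analytics existed in the delivered system.

```python
from collections import Counter


def flag_rates(flag_events, applications_reviewed):
    """Share of reviewed applications in which each field was flagged at least once.

    flag_events: iterable of (application_id, field_name) pairs (assumed shape).
    applications_reviewed: total number of applications reviewed.
    """
    # Count each field at most once per application.
    distinct = set(flag_events)
    counts = Counter(field_name for _, field_name in distinct)
    return {name: n / applications_reviewed for name, n in counts.items()}


# Made-up events for illustration only.
events = [
    ("app-1", "passport_scan"),
    ("app-2", "passport_scan"),
    ("app-1", "passport_scan"),  # second round on the same application
    ("app-3", "medical_form"),
]
rates = flag_rates(events, applications_reviewed=5)
```

A field with a persistently high rate would point to a guidance or form design problem rather than to individual applicant error.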

What I’d test or validate next

- Whether the HSP field-locking was too restrictive. Locking uncommented fields during feedback rounds was a sensible constraint - it prevented users from changing things the reviewer hadn’t flagged, avoiding a full re-review. But it may have created friction for users who legitimately needed to update other fields at the same time. I never got to validate whether that balance was right.
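The locking rule in question can be stated compactly. This is a sketch with a hypothetical function name and field names, not the HSP implementation: during a feedback round only commented fields are editable, and outside a round every field is.

```python
def editable_fields(all_fields, commented_fields, in_feedback_round):
    """Return the set of field names a user may edit.

    During a feedback round, only fields the reviewer commented on are unlocked.
    """
    if not in_feedback_round:
        return set(all_fields)
    return set(all_fields) & set(commented_fields)


# Illustrative field names only.
form_fields = ["full_name", "passport_scan", "vaccination_record"]
unlocked = editable_fields(form_fields, ["passport_scan"], in_feedback_round=True)
```

The open question is what a user does when a locked field, such as `full_name` in this example, also needs correcting during the same round.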